Introduction:

When choosing the right technology for your organization, it’s essential to understand the differences between a mainframe and a server. Mainframes have been the backbone of large organizations for decades. Servers, however, have become increasingly popular in recent years, offering scalability and affordability for many applications.

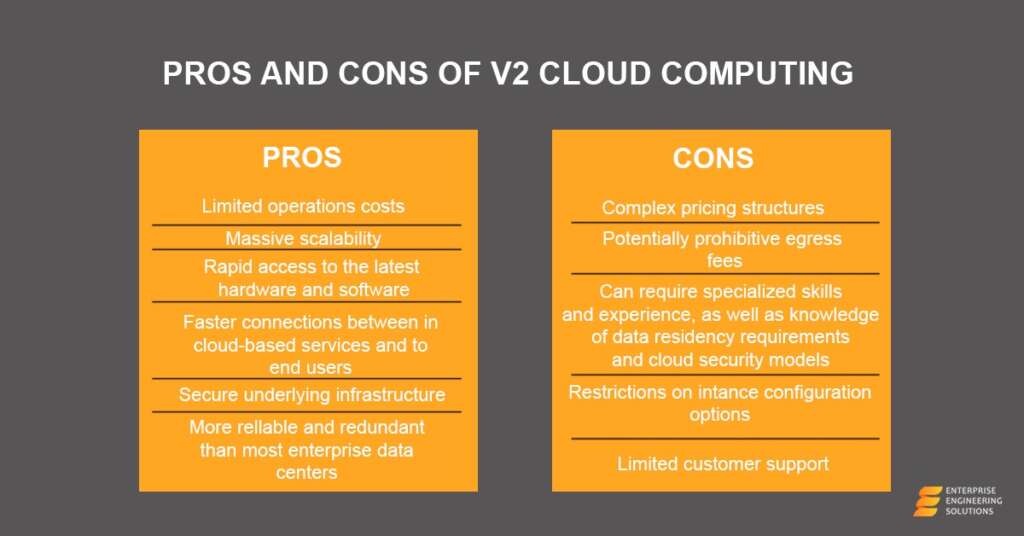

But what exactly sets these two technologies apart, and which is right for your business? It is also worth keeping a third option in mind: cloud computing can offer businesses greater scalability, cost savings, and flexibility than traditional on-premises mainframes or servers.

In this article, we’ll take a closer look at the key differences, strengths, and weaknesses of both technologies to help you make an informed decision.

What is a Server?

A server is a networked computer that manages access to hardware, software, and other resources while serving as centralized storage for programs, data, and information. It may serve anything from two to thousands of client computers at a time. Users reach the data, information, and applications on a server from personal computers or terminals.

What is a Mainframe?

A mainframe is a large, high-performance computer built to process very high volumes of transactions for many users at once. Its data and information can be accessed via servers and other mainframes. Neither mainframes nor servers, however, offer the on-demand elasticity of cloud services, which let businesses quickly increase or decrease the resources they use as their needs change.

Enterprises can use mainframes to bill millions of consumers, process payroll for thousands of employees, and track inventory. According to research, mainframes handle more than 83 percent of global transactions.

Differences Between Mainframe And Server

Mainframes and servers are two of the most important computer systems used in today’s businesses. Both are designed to store and process large amounts of data, but they differ in several ways and have different strengths and weaknesses.

| | Mainframe | Server |
|---|---|---|
| Size and Power | Mainframes are large and powerful computers designed to handle heavy workloads. Modern mainframes are about the size of a refrigerator. They can process massive amounts of data and support thousands of users simultaneously, making them ideal for large organizations with critical applications. | A typical commodity server is physically smaller than a mainframe and is designed for one or a few specific tasks. Servers range in size from a small tower computer to a rack-mounted system. They often support specific business functions, such as file and print services, web hosting, and database management. |
| User Capacity | Mainframes are designed to handle many transactions per second for thousands of simultaneous users, providing fast and reliable access to data. | Servers typically support fewer users. They are designed to handle a smaller workload but can be scaled up to support more users if necessary. |
| Cost | Mainframes are more expensive than servers in terms of both initial investment and ongoing maintenance. They require significant hardware, software, and personnel investment to set up and maintain. However, their reliability and security can justify the cost for organizations with critical applications. | Servers are typically less expensive, making them a more economical option for smaller businesses or those with less critical applications. They are easier to set up and maintain and require fewer resources to run. |
| Applications | Mainframes run critical applications, such as financial transactions and airline reservations, where dependability and safety are of utmost importance. They are built to handle massive amounts of data and provide quick and efficient access to information. | Servers can be used for various tasks, including file and print services, web hosting, and database management. They often support specific business functions and can be scaled up to meet changing business demands. |
| Reliability | Mainframes are known for their high levels of reliability and uptime. They can keep critical applications running and provide protected, fast access to data even during a component failure. They are also equipped with advanced security features to protect sensitive information. | An individual server can be less reliable because of its more limited resources and redundancy. Servers handle smaller workloads than mainframes and typically provide lower levels of reliability and uptime. |

Suitable Uses of Mainframe Computers in Various Industries

Once upon a time, the term “mainframe” referred to a massive computer capable of processing enormous workloads. Today, mainframes are useful in health care, schools, government organizations, energy utilities, manufacturing operations, enterprise resource planning, and online entertainment delivery.

They are also well suited to serving as the back end for the Internet of Things (IoT) and other connected devices, including PCs, laptops, cellphones, automobiles, security systems, “smart” appliances, and utility grids.

IBM z Systems servers account for over 90% of the mainframe market. A mainframe computer differs from the x86/ARM hardware we use daily. Modern IBM z Systems servers are far smaller than previous mainframes, although they are still sizable. They’re tough, durable, secure, and equipped with cutting-edge technology.

The possible reasons for using a mainframe include the following:

- The latest computing style

- Effortless centralized data storage

- Easier resource management

- High-demand mission-critical services

- Robust hot-swap hardware

- Unparalleled security

- High availability

- Secure massive transaction processing

- Efficient backward compatibility with older software

- Massive throughput

- Multiple levels of redundancy in every component, including the power supply, cooling, backup batteries, CPUs, I/O components, and cryptographic modules

Mainframes Support Unique Use Cases

Mainframes come into their own when commodity servers cannot cope. Their capacity to handle large numbers of transactions, their high dependability, and their support for varied workloads make them indispensable in many sectors. Companies may use both commodity servers and mainframes, but a mainframe can cover gaps that other servers can’t.

Mainframes Handle Big Data

According to IBM, the z13 mainframe can manage 2.5 billion daily transactions. That’s a considerable quantity of data and throughput. If your big data workloads outgrow commodity servers, upgrading to a mainframe is worth considering.

It’s challenging to compare this figure directly with a commodity server, since the number of transactions a server can support varies depending on what is running on it. Furthermore, the types of transactions may be vastly different, making it hard to compare apples to apples.

However, assuming that a typical database on a standard commodity server can handle 300 transactions per second, that works out to roughly 26 million transactions per day (300 × 86,400 seconds), a significant number but nowhere near the billions a mainframe can handle.
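The arithmetic behind that comparison can be checked with a short sketch. It uses only the two figures quoted above: the assumed 300 transactions per second for a commodity database server and IBM's stated 2.5 billion daily transactions for the z13. The function and variable names are illustrative, and the server rate is an assumption rather than a benchmark.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds in a day


def daily_transactions(per_second: float) -> float:
    """Convert a sustained transactions-per-second rate into a daily total."""
    return per_second * SECONDS_PER_DAY


# Figures quoted in the article; the server rate is an assumption, not a measurement.
server_tps = 300
mainframe_daily = 2_500_000_000  # IBM's stated figure for the z13

server_daily = daily_transactions(server_tps)
ratio = mainframe_daily / server_daily

print(f"Commodity server: {server_daily:,.0f} transactions per day")
print(f"Mainframe handles roughly {ratio:.0f} times as many")
```

Under these assumptions the server reaches about 25.9 million transactions per day, so the quoted mainframe figure is on the order of a hundred times larger. A different workload mix would change both numbers.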

Mainframes Run Unique Software (Sometimes)

Mainframes are generally driven by mainframe-specific programs written in languages like COBOL, which is a significant differentiating characteristic. They also use proprietary operating systems, such as z/OS. Three important points to ponder are:

- Mainframe-specific workloads generally cannot be moved to a commodity server as-is.

- You may transfer tasks that typically run on a commodity server to a mainframe.

- Virtualization allows most mainframes to run Linux as well as z/OS.

As a result, the mainframe provides you with the best of both worlds: You’ll have access to a unique set of apps that you won’t find anywhere else and the capacity to manage commodity server workloads.

Mainframes May Save Money If Used Correctly

A single mainframe can cost $75,000 or more, considerably more than the two or three thousand dollars a decent x86 server might cost. Of course, the higher price does not mean the mainframe is poor value. With a $75,000 mainframe, you receive far more processing power than a commodity server provides.
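One rough way to reason about this is purchase price per unit of daily throughput. The sketch below is illustrative only: it pairs the $75,000 price with the 2.5 billion daily transactions quoted for the z13 and a $3,000 server with the assumed 300 transactions per second, even though the article does not say these prices and throughputs belong to the same machines. It also ignores software, staffing, and maintenance costs. The function name is hypothetical.

```python
def cost_per_million_daily(price_usd: float, daily_transactions: float) -> float:
    """Purchase price divided by daily capacity, in USD per million transactions/day."""
    return price_usd / (daily_transactions / 1_000_000)


# Assumption: pairing these prices with these throughputs is for illustration only.
mainframe_cost = cost_per_million_daily(75_000, 2_500_000_000)
server_cost = cost_per_million_daily(3_000, 300 * 86_400)

print(f"Mainframe: ${mainframe_cost:.2f} per million daily transactions")
print(f"Server:    ${server_cost:.2f} per million daily transactions")
```

On these figures the mainframe comes out cheaper per unit of capacity, but only if the organization actually has enough transaction volume to use that capacity.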

However, cloud computing is often more cost-efficient than investing in and maintaining expensive mainframes or servers.

Conclusion

Mainframes and servers are both capable computer systems, each with its own pros and cons. Organizations with critical applications may benefit from the high reliability and security of mainframes, while those with less critical applications may find servers a more affordable option. Ultimately, the choice between a mainframe and a server depends on the specific needs of the organization and the applications it needs to run.